Neural Style Transfer | Vibepedia

Neural Style Transfer (NST) is a fascinating application of deep learning that allows users to reimagine an image in the artistic style of another. This…

Overview

The genesis of Neural Style Transfer can be traced back to early work in computer vision and neural networks, particularly research into how convolutional neural networks (CNNs) process visual information. While Yann LeCun and others laid the groundwork for CNNs in the 1990s, it was the paper "A Neural Algorithm of Artistic Style" by Leon A. Gatys, Alexander S. Ecker, and Matthias Bethge, first posted in 2015, that ignited the field of NST. This seminal work demonstrated how to extract and recombine content and style representations from images using a pre-trained CNN, VGG-19. Prior to this, artistic image manipulation was largely confined to manual techniques or simpler algorithmic filters; Gatys's team showed that deep neural networks could capture and transfer complex artistic aesthetics, moving beyond mere texture synthesis to genuine stylistic interpretation. The research emerged from the academic environment of the University of Tübingen and quickly captured the imagination of researchers and artists alike.

⚙️ How It Works

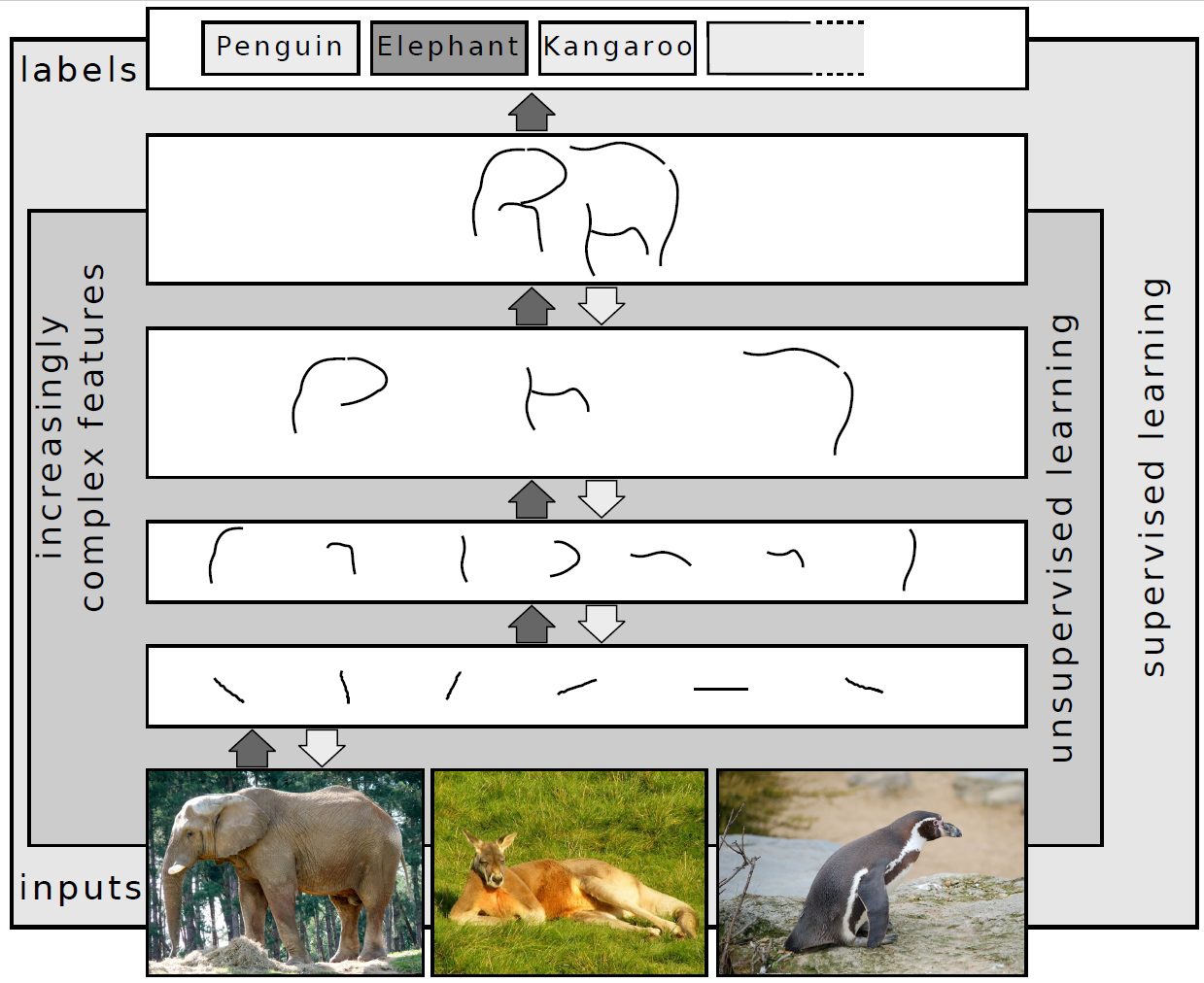

At its core, Neural Style Transfer leverages a pre-trained CNN, often VGG-19, which has learned to recognize hierarchical features in images. The process involves three images: a content image, a style image, and a generated output image, all of which are passed through the CNN. The network's weights stay fixed; only the pixels of the output image are updated. The "content" of the output is guided by minimizing the difference between the feature representations of the content image and the output image in the deeper layers of the CNN. Simultaneously, the "style" of the output is guided by minimizing the difference between the correlations of feature activations (captured by Gram matrices) in the style image and the output image, typically across multiple layers. This joint optimization, driven by gradient descent on a weighted sum of the two losses, iteratively refines the output image until it resembles the content of the first image and the style of the second. The choice of layers for content and style extraction significantly impacts the final result, with deeper layers capturing more abstract content and shallower layers capturing finer stylistic details like brushstrokes and color palettes.
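The two loss terms described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the original authors' implementation: the function names, the `alpha` and `beta` weights, and the choice to normalize the Gram matrix by the number of spatial positions are all assumptions made for this example, and random arrays stand in for real CNN activations.

```python
import numpy as np

def gram_matrix(features):
    """Channel-by-channel correlations of a (C, H, W) feature map.

    Normalizing by H*W is an assumption of this sketch; published
    implementations use different scaling constants.
    """
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    return flat @ flat.T / (h * w)

def content_loss(generated, content):
    """Mean squared difference between two feature maps of one layer."""
    return float(np.mean((generated - content) ** 2))

def style_loss(generated_layers, style_layers):
    """Sum over layers of the mean squared difference between Gram matrices."""
    return float(sum(
        np.mean((gram_matrix(g) - gram_matrix(s)) ** 2)
        for g, s in zip(generated_layers, style_layers)
    ))

def total_loss(gen_content, content, gen_style_layers, style_layers,
               alpha=1.0, beta=1000.0):
    """Weighted sum of content and style terms (alpha, beta are hypothetical)."""
    return (alpha * content_loss(gen_content, content)
            + beta * style_loss(gen_style_layers, style_layers))

# Random arrays stand in for CNN activations (assumption: 4 channels, 8x8).
rng = np.random.default_rng(0)
feat = rng.random((4, 8, 8))

# Shuffle the spatial positions: the Gram matrix is unchanged up to rounding,
# which is why it describes "style" without pinning down spatial layout.
shuffled = feat.reshape(4, -1)[:, rng.permutation(64)].reshape(4, 8, 8)
print(np.allclose(gram_matrix(feat), gram_matrix(shuffled)))
```

The shuffling check at the end shows why Gram matrices suit style: they record which features co-occur, not where they occur, so the content term is left to carry the spatial structure.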

📊 Key Facts & Numbers

Since its inception, Neural Style Transfer has become far more efficient. Early implementations ran an iterative optimization for every output, which could take from minutes to hours on a GPU depending on resolution. By 2016, researchers had developed fast neural style transfer algorithms that generate stylized images in near real time, cutting processing to milliseconds on modern hardware. The speedup comes from training a separate feed-forward network to perform the style transfer in a single forward pass, rather than relying on iterative optimization for each new image.
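To make the cost of the original approach concrete, here is a toy version of the per-image optimization loop. It is a sketch under strong assumptions: the "features" are the pixel values themselves (an identity feature extractor standing in for the CNN), the gradient is written out by hand, and `steps`, `lr`, `alpha`, and `beta` are hypothetical settings. What it shows is the structure that makes this path slow: every output image needs its own run of many gradient steps.

```python
import numpy as np

def gram(x):
    """x: (C, N) flattened features -> (C, C) Gram matrix averaged over N."""
    return x @ x.T / x.shape[1]

def loss_and_grad(x, content, style_gram, alpha, beta):
    """Total loss and its gradient with respect to the image x."""
    n = x.shape[1]
    diff = x - content                 # content term residual
    d = gram(x) - style_gram           # style term residual (symmetric)
    loss = alpha * np.mean(diff ** 2) + beta * np.sum(d ** 2)
    # d/dx mean(diff^2) = 2*diff/size; d/dx sum(d^2) = (4/n) * d @ x
    grad = alpha * 2.0 * diff / diff.size + beta * (4.0 / n) * (d @ x)
    return float(loss), grad

def stylize_by_optimization(content, style, steps=200, lr=0.5,
                            alpha=1.0, beta=1.0):
    """Start from the content image and descend the combined loss."""
    x = content.copy()
    target = gram(style)
    history = []
    for _ in range(steps):             # this loop runs once per output image
        loss, grad = loss_and_grad(x, content, target, alpha, beta)
        history.append(loss)
        x -= lr * grad
    return x, history

# Toy "images": 3 channels, 64 pixels, values in [0, 1).
rng = np.random.default_rng(0)
content = rng.random((3, 64))
style = rng.random((3, 64))
result, history = stylize_by_optimization(content, style)
print(f"loss {history[0]:.6f} -> {history[-1]:.6f} in {len(history)} steps")
```

In the real setting each of those steps is a forward and backward pass through a deep network. A feed-forward stylization network moves that work into a one-time training phase, so producing an image afterwards costs a single forward pass.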

👥 Key People & Organizations

Major tech companies like Google and Meta Platforms have invested heavily in deep learning research, with their AI labs exploring and implementing NST-like technologies in various products. Open-source communities on platforms like GitHub have also been instrumental, with numerous developers contributing code and pre-trained models, fostering widespread adoption and experimentation.

🌍 Cultural Impact & Influence

Neural Style Transfer has profoundly impacted digital art and creative expression, democratizing artistic creation. It has reportedly enabled individuals without traditional artistic training to generate visually striking images, blending personal photos with the styles of masters like Vincent van Gogh, Claude Monet, or Pablo Picasso. The technology has permeated social media platforms, with stylized selfies and artistic photo filters becoming commonplace. Beyond personal use, NST has found its way into graphic design, advertising, and even film production for generating unique visual assets or concept art. The ease with which complex artistic styles can be replicated has also sparked discussions within the art world about originality, authorship, and the role of the artist in an increasingly automated creative landscape. Its influence can be seen in the proliferation of AI-generated art tools, many of which build upon the foundational principles established by NST.

⚡ Current State & Latest Developments

The current state of Neural Style Transfer is characterized by increasing sophistication and accessibility. Researchers are developing techniques for video style transfer that maintain temporal consistency, preventing flickering between frames. New architectures and loss functions are being explored to achieve finer control over style and content, allowing for more nuanced artistic results. For instance, techniques like "style mixing" enable the combination of multiple styles, and "content-aware" style transfer aims to preserve more of the original image's structure. The integration of NST with other generative AI models, such as Generative Adversarial Networks (GANs), is also a major trend, leading to more complex and photorealistic artistic outputs. Newer architectures, including Transformer-based models, are further accelerating progress.
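One simple way to picture combining multiple styles is to blend the Gram-matrix targets of several style images before optimizing. The sketch below assumes that reading of "style mixing"; it is not a specific published method, and `mixed_style_target` and its weighting scheme are hypothetical names and choices introduced here for illustration.

```python
import numpy as np

def gram_matrix(features):
    """(C, H, W) feature map -> (C, C) Gram matrix averaged over positions."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    return flat @ flat.T / (h * w)

def mixed_style_target(style_features, weights):
    """Weighted average of the Gram matrices of several style images.

    style_features: list of (C, H, W) arrays, one per style image,
                    all taken from the same layer.
    weights: non-negative mixing weights, rescaled here to sum to 1.
    """
    weights = np.asarray(weights, dtype=float)
    if len(style_features) != len(weights):
        raise ValueError("need exactly one weight per style image")
    if np.any(weights < 0) or weights.sum() == 0:
        raise ValueError("weights must be non-negative and not all zero")
    weights = weights / weights.sum()
    grams = np.stack([gram_matrix(f) for f in style_features])  # (K, C, C)
    return np.tensordot(weights, grams, axes=1)                 # (C, C)

# Random arrays stand in for activations of two style images (assumption).
rng = np.random.default_rng(0)
style_a = rng.random((4, 8, 8))
style_b = rng.random((4, 8, 8))
target = mixed_style_target([style_a, style_b], weights=[3, 1])
print(target.shape)
```

The blended matrix would then replace the single-style Gram target in the style loss; shifting the weights moves the result toward one style or the other.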

🤔 Controversies & Debates

Neural Style Transfer is not without its controversies. A primary debate centers on authorship and originality: when an AI generates art in the style of a human artist, who is the true creator? This question becomes particularly acute when the AI is trained on copyrighted works without explicit permission, raising legal and ethical concerns about intellectual property. Critics argue that NST can devalue the skill and labor of human artists by making complex stylistic imitation easily achievable. Furthermore, the potential for misuse, such as creating deepfakes or generating propaganda in a recognizable artistic style, presents significant ethical challenges. The debate also touches upon the definition of art itself – is an algorithmically generated image truly art, or merely a sophisticated imitation? The controversy score for NST is high, reflecting ongoing debates about its creative, ethical, and legal implications.

🔮 Future Outlook & Predictions

The future of Neural Style Transfer appears to be one of deeper integration and enhanced control. We can expect to see more sophisticated video stylization techniques that are indistinguishable from human-directed artistic choices. The ability to control specific elements of style, such as brushstroke size, color palette intensity, or even the emotional tone of an artwork, will likely become more granular. Furthermore, NST is poised to become a standard tool within broader creative workflows, seamlessly integrated into professional design software and even virtual reality environments. The development of personalized style models, trained on an individual's own artwork or preferences, could lead to highly bespoke artistic outputs. As computational power continues to grow and algorithms become more efficient, the line between human and AI-generated art will likely blur further, prompting new philosophical and artistic discussions about creativity itself.

💡 Practical Applications

Neural Style Transfer has a wide array of practical applications beyond artistic experimentation. In graphic design and advertising, it's used to create unique visual branding and marketing materials.

Key Facts

- Category: technology
- Type: topic